Vendor Risk Management Market: Industry Overview and Forecast

In today’s interconnected business ecosystem, organizations increasingly rely on third-party vendors to support operations, innovation, and growth. While these partnerships offer significant advantages, they also introduce a wide range of risks. Vendor Risk Management (VRM) provides a structured and systematic approach to identifying, assessing, monitoring, and mitigating risks associated with third-party relationships—helping organizations maintain resilience, compliance, and trust.

Click here for more: https://qksgroup.com/market-research/market-forecast-vendor-risk-management-2026-2030-worldwide-2144

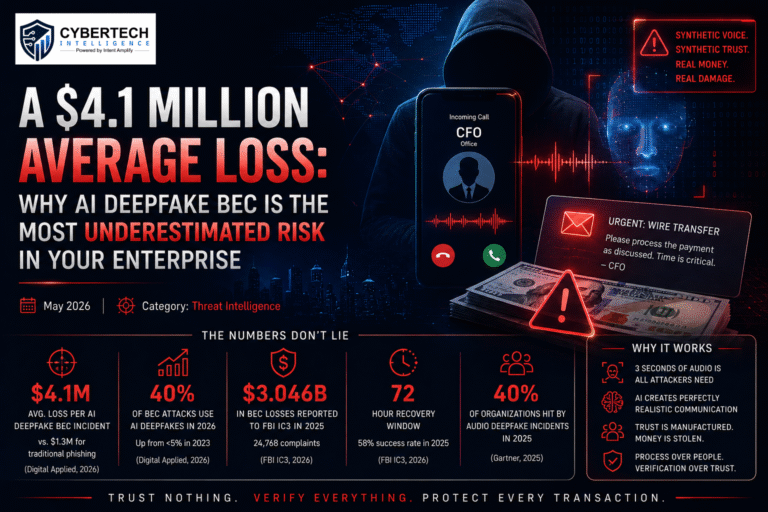

At its core, Vendor Risk Management focuses on protecting organizations from potential legal, reputational, financial, and cyber risks that may arise when engaging external partners. Vendors often have access to sensitive systems, applications, and data, making them an extended part of the organization’s security perimeter. A single weak link can expose businesses to data breaches, regulatory penalties, or operational disruptions. This is where modern VRM platforms play a critical role.

VRM platforms offer centralized visibility into third-party risk while ensuring alignment with regulatory requirements and industry standards. By automating assessments, documentation, and monitoring processes, these platforms reduce manual workloads and operational costs, enabling security and risk teams to focus on strategic initiatives. Automation also improves consistency and accuracy across vendor evaluations, eliminating fragmented processes and spreadsheets that traditionally slow down risk management efforts.

A comprehensive VRM lifecycle typically begins with vendor identification and onboarding. During this stage, organizations collect essential information about vendors, assess inherent risks, and perform due diligence checks. Once onboarded, vendors move into continuous monitoring, where their risk posture is regularly evaluated through questionnaires, performance reviews, security ratings, and compliance validations. This ongoing oversight ensures that emerging risks are detected early and addressed proactively.

As relationships evolve, VRM platforms help organizations reassess vendors based on changes in scope, access levels, or regulatory obligations. Finally, the lifecycle concludes with vendor termination and offboarding, ensuring access is revoked, data is securely handled, and contractual obligations are properly closed—reducing residual risk after the partnership ends.

Beyond risk reduction, effective Vendor Risk Management strengthens governance and accountability across the organization. It enables leadership to make informed decisions about third-party engagements, supports audit readiness, and enhances overall cyber resilience. In an era where supply chain attacks and third-party breaches are on the rise, VRM is no longer optional—it is a business imperative.

By adopting a robust VRM platform, organizations can gain end-to-end visibility into third-party risk, streamline workflows through automation, and build a secure, compliant vendor ecosystem that supports long-term growth.

Download Sample Report Here: https://qksgroup.com/download-sample-form/market-share-vendor-risk-management-2025-worldwide-2340

Key questions this study will answer:

At what pace is the Vendor Risk Management Market growing?

What are the key market accelerators and market restraints impacting the global Vendor Risk Management Market?

Which industries offer maximum growth opportunities during the forecast period?

Which global region is expected to offer the greatest growth opportunities in the Vendor Risk Management market?

Which customer segments have the maximum growth potential for the Vendor Risk Management solution?

Which deployment options of Vendor Risk Management are expected to grow fastest over the next five years?

Strategic Market Direction:

Vendor Risk Management (VRM) is becoming a strategic priority as businesses work to manage the risks associated with their third-party relationships. Organizations increasingly recognize the need for more proactive and comprehensive strategies across their vendor ecosystems, aiming for greater security, compliance, and resilience. This shift helps them adapt to a changing risk landscape and maintain a more robust and secure operational environment.

Vendors Covered:

IBM, ServiceNow, Mitratech, MetricStream, LogicGate, LogicManager, NAVEX, Ncontracts, OneTrust, Prevalent, ProcessUnity, Resolver, SAI360, Allgress, Aravo Solutions, Archer, Coupa Software, Diligent, Fusion Risk Management, Quantivate, SureCloud, ThirdPartyTrust, Venminder.

Related Reports:

Market Forecast: Vendor Risk Management, 2026-2030, USA: https://qksgroup.com/market-research/market-forecast-vendor-risk-management-2026-2030-usa-5569

Market Share: Vendor Risk Management, 2025, Latin America: https://qksgroup.com/market-research/market-share-vendor-risk-management-2025-latin-america-5447

#VendorRiskManagementMarket #ThirdPartyRiskManagementMarket #VRM #vendor #riskmanagement #security #VendorManagement #VendorRiskManagement #ThirdPartyRiskManagement #VendorRiskAssessment #ThirdPartyRiskManagementSoftware #ThirdPartyVendorManagement #ThirdPartyVendorRiskAssessment #ThirdPartyRiskAssessment #Cybersecurity #VRMPlatform #Business
